Hi and welcome once again to the weekly Memia scan across emerging tech and the accelerating future from me, Ben Reid. Thanks for reading!

This week’s newsletter is **HUGE** — there has been **SO MUCH HAPPENING** — so it’s a bit of an impressionistic skim to get across everything … a lot of pictures and video which explain better than words. Strap in, scroll fast. Funnies at the end.

The most clicked link in last week’s newsletter (2nd week running, 3% of openers) was Anthropic’s Claude 3 Prompt Library. (See below for latest adventures with Claude…)

I have a few upcoming speaking engagements, all in Tāmaki Makaurau, maybe see you there:

(Reminder: If you’d like to book me to speak at your conference or in-house event, reach out).

Since last week’s dive-in on future AI chips…

Just a few days later… NVidia CEO Jensen Huang launched the new Blackwell platform and GPU architecture at the annual GTC with typical understated showmanship:

“The world’s most powerful chip”

NVidia

Blackwell in summary:

- Comprehensive AI platform designed for trillion-parameter scale generative AI.

- The “GB200 Grace Blackwell Superchip” combines two B200 GPUs and an NVidia Grace CPU for incredible performance and energy efficiency uplift:

  “The GB200 NVL72 provides up to a 30x performance increase compared to the same number of NVIDIA H100 Tensor Core GPUs for LLM inference workloads, and reduces cost and energy consumption by up to 25x.”

- Combined together, a single DGX rack of GB200s exceeds 1 Exaflop compute🤯

NVidia AI scientist Jim Fan crowed:

“Put numbers in perspective: the first DGX that Jensen delivered to OpenAI was 0.17 Petaflops. > GPT-4-1.8T parameters can finish training in 90 days on 2000 Blackwells. > New Moore’s law is born.”
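To put those two headline numbers side by side, here is a quick back-of-envelope sketch (my own arithmetic, not NVidia's). It assumes the 0.17 Petaflops figure from the quote and the "1 Exaflop per rack" figure above; the variable names are just for illustration. Headline FLOPS figures are often quoted at different numeric precisions, so treat the ratio as a rough indication only.

```python
# Rough ratio between a 1 Exaflop rack and the first DGX (0.17 Petaflops).
# Both inputs are the headline figures quoted in the text, not measured values.
PETA = 1e15
EXA = 1e18

first_dgx_flops = 0.17 * PETA   # first DGX delivered to OpenAI, per the quote
gb200_rack_flops = 1.0 * EXA    # lower bound for a single GB200 rack, per the text

speedup = gb200_rack_flops / first_dgx_flops
print(f"Roughly {speedup:,.0f}x the compute of the first DGX")
```

That works out to a bit under 6,000x.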

Also announced right at the end of the keynote… Project GR00T (Generalist Robot 00 Technology), a general-purpose foundation model for humanoid robots (to be led by Jim Fan), and Jetson Thor, a new computer for humanoid robots. Check out the announcement with Jensen Huang plus 9 different humanoid robot models on stage… together with two new Disney Research character robots playing their part. I think people have really been underestimating how quickly this is going to arrive.

(Still processing this acceleration…NVidia is the only scaled player with strategically perfect positioning in this enormous future value chain. No wonder the company is now worth US$2.2 trillion.)

The week’s other AI news and releases…

On the topic of humanoid robots… 2 weeks ago in Memia 2024.09 I covered the US$675M investment round in humanoid robot maker Figure led by OpenAI, NVIDIA, Microsoft and others. Then a week ago just as I was clicking send on Memia 2024.10, they released this demo video of Figure animated with speech-to-speech reasoning by GPT. With built-in hesitant conversational style… oh, boy:

In other OpenAI news this week…

- (Also, despite Microsoft’s Copilot team giving us all shivers with this typo below… this was real, I looked it up, and it was like this for over 12 hours… was it a prank?!)

- As usual the guessers are reading the tea leaves, which now seem to indicate that “GPT 4.5” will come out around June… who knows.

- OpenAI CEO Sam Altman also had a busy week… giving an interview with a group of Korean journalists in which he said (auto-translated from Korean, may be lost in translation but you get the gist):

“Many startups assume that the development of GPT-5 will be slow because they are happier with only a small development (since there are many business opportunities) rather than a major development, but I think it is a big mistake…When this happens, as often happens, it will be ‘steamrolled’ by the next generation model”

He’s also now openly hyping imminent AGI (can you imagine anyone saying this 1 year ago and being taken seriously…?!)

“What I am most excited about from AGI is that the faster we develop AI through scientific discoveries, the faster we will be able to find solutions to power problems by making nuclear fusion power generation a reality…Scientific research through AGI will lead to sustainable economic growth…I think it is almost the only driving force and determining factor”

- In conversation with Lex Fridman, he clearly now has AGI tunnel vision:

“I think compute is going to be the currency of the future. I think it’ll be maybe the most precious commodity in the world. I expect that by the end of this decade, and possibly somewhat sooner than that, we will have quite capable systems that we look at and say, “Wow, that’s really remarkable.” The road to AGI should be a giant power struggle. I expect that to be the case.”

- At least he still plays an edgy game on X:

In case you haven’t been keeping up, OpenAI’s GPT-4 model has a worthy new challenger in Anthropic’s Claude 3. I’ve been using it more and more in the last few weeks, particularly for ideation and summarisation tasks and anecdotally have found it to be more useful.

The depths of the model are still being explored. In particular, this thread by @anthrupad (an AI engineer who was also responsible for the legendary Shoggoth cartoon explaining LLMs one year ago) is worth exploring in more detail… Claude is very good at drawing flowchart / decision tree diagrams… which allows humans to probe its thinking processes (eg the compression of all humanity’s thinking on a topic…) in depth. (Click through to the tweet to view the images in higher resolution)

I had a quick go myself… here is Claude 3 Opus playing out a causal loop of scenarios stemming from the “polycrisis”. Not 100% perfect, but pretty mindblowing one-shot stuff from 5 minutes of playing around with a prompt:

The EU Parliament passed the AI Act. The regulatory framework takes a “risk-based approach” defining 4 levels of risk for AI systems:

However Silicon Valley is… unimpressed:

You’ve been hearing a lot from me (and Sam Altman, and others…) about how we can all be really optimistic that AI is going to accelerate scientific discovery beyond anything we’ve ever imagined…

On the other hand:

Yikes.

Clearly the process of scientific peer-review is going to go the same way as academic grading… there is already no way to detect with 100% reliability whether new papers have been partly or wholly created by an LLM. And even if there were, so what? Just because the authors used an LLM doesn’t *necessarily* negate the scientific findings…!

(Let’s not even raise the spectre of AI hallucination…)

(Also a reminder that for non-native English speakers using AI is an entirely legitimate strategy to improve the quality of their writing.)

Coincidentally…a paper was released this week on exactly this topic (ironic if it was written and reviewed partly by AI!): Monitoring AI-Modified Content at Scale: A Case Study on the Impact of ChatGPT on AI Conference Peer Reviews:

“We present an approach for estimating the fraction of text in a large corpus which is likely to be substantially modified or produced by a large language model (LLM). Our maximum likelihood model leverages expert-written and AI-generated reference texts to accurately and efficiently examine real-world LLM-use at the corpus level. We apply this approach to a case study of scientific peer review in AI conferences that took place after the release of ChatGPT: ICLR 2024, NeurIPS 2023, CoRL 2023 and EMNLP 2023. Our results suggest that between 6.5% and 16.9% of text submitted as peer reviews to these conferences could have been substantially modified by LLMs, i.e. beyond spell-checking or minor writing updates. The circumstances in which generated text occurs offer insight into user behavior: the estimated fraction of LLM-generated text is higher in reviews which report lower confidence, were submitted close to the deadline, and from reviewers who are less likely to respond to author rebuttals. We also observe corpus-level trends in generated text which may be too subtle to detect at the individual level, and discuss the implications of such trends on peer review. We call for future interdisciplinary work to examine how LLM use is changing our information and knowledge practices.”
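For the curious, here is a minimal sketch of what a corpus-level maximum likelihood estimate like this could look like. To be clear, this is my own toy illustration and not the authors' code: the function name `estimate_llm_fraction` and the made-up per-review probabilities are assumptions, and a simple grid search stands in for whatever optimiser the paper actually uses. The idea is to treat the corpus as a mixture of human-written and AI-generated text and find the mixing weight that best explains all the reviews at once, without ever labelling an individual review.

```python
import math

def estimate_llm_fraction(p_human, p_ai, steps=1000):
    """Grid-search the mixture weight alpha that maximises the corpus log-likelihood.

    p_human[i] and p_ai[i] are the (strictly positive) probabilities of document i
    under the human-written and AI-generated reference models. Both lists are
    hypothetical inputs for this sketch.
    """
    best_alpha, best_ll = 0.0, -math.inf
    for k in range(steps + 1):
        alpha = k / steps
        # Each document is modelled as drawn from (1 - alpha) * human + alpha * AI.
        ll = sum(math.log((1 - alpha) * p + alpha * q)
                 for p, q in zip(p_human, p_ai))
        if ll > best_ll:
            best_alpha, best_ll = alpha, ll
    return best_alpha

# Toy corpus: 8 reviews look human-written, 2 look AI-generated.
p_human = [0.9] * 8 + [0.1] * 2
p_ai = [0.1] * 8 + [0.9] * 2
print(estimate_llm_fraction(p_human, p_ai))
```

With these toy numbers the estimate comes out at about 0.125, lower than the 2-in-10 reviews that "look" AI-generated, because the mixture model allows for some human-written reviews being mistaken for AI text. That is the appeal of working at the corpus level: the overall fraction can be estimated even when no single review can be reliably classified.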

I’m not a scientist but I can’t really see a direct solution to this without fundamentally changing the way things are done in scientific publishing: particularly while academic prestige and professional success are measured by the quantity of papers published… it’s just too easy to game. (And academic publishers themselves are having their *rent-taking* business models disrupted by open access…)

My instinct is that the scientific publishing process needs to be completely retooled with AI to counter the risks of using AI. Something along the lines of:

- AI-generated content detection scans run across all papers before they are published (just to keep the bar higher than zero, eh). Above a certain level, credibility score goes down…

- More radically: creation of huge scientific AI models which are trained on every scientific paper ever… but with weights based upon some kind of “scientific truth objective function”: (1) conventional reputational factors such as author, institution, citations etc, but more importantly (2) the coherence, consistency, verifiability and repeatability of the scientific findings themselves. Likely these massive science AI models will require more reasoning capabilities than just language generation. (Plus they will *need* to be open-source).

- All new papers would then be benchmarked against the existing scientific AI models for coherence, consistency, novelty, repeatability… etc.

- The human-in-the-loop peer review is thus augmented (…if not rendered irrelevant…?) by the automated AI review process.

- A decentralised peer-review mechanism would add resilience as well (eg what happened spontaneously for LK-99 last year).

- The unit of a “scientific paper” itself may well be decomposed down to, well, updates to the AI model weights…

- Academic status thus becomes the weighted average of the Science AI Benchmarks for the papers you’ve published. More meritocratic, at least until someone works out how to game the AI.

Not sure academic institutions know what’s coming…

I told you it’s been a busy week….

- 🍎Apple has entered the chat: After CEO Tim Cook recently hinted that Apple was “all in” on AI, Apple AI researchers quietly published a paper on arXiv detailing their new MM1 multimodal AI model architecture:

  - MM1 is a family of multimodal generative AI models up to 30B parameters

  - Both dense models and mixture-of-experts (MoE) variants

  - Exact scaling law coefficients (to 4 significant figures)

  - Trained on GPT-4V-generated data (implying a deep partnership with OpenAI…)

Industry commentary was pleasantly surprised at the level of openness which Apple has employed here – perhaps a condition of hiring the best AI talent.

I wonder what will be announced at WWDC later this year…? Siri++ ??

- 🚨BREAKING: Apple is in talks to build Google’s Gemini AI engine into the iPhone in a potential blockbuster deal (but also in talks with OpenAI).

- 🎮Google DeepMind released SIMA: A generalist AI agent for 3D virtual environments:

“Today, we’re announcing a new milestone – shifting our focus from individual games towards a general, instructable game-playing AI agent…we introduce SIMA, short for Scalable Instructable Multiworld Agent, a generalist AI agent for 3D virtual settings. We partnered with game developers to train SIMA on a variety of video games. This research marks the first time an agent has demonstrated it can understand a broad range of gaming worlds, and follow natural-language instructions to carry out tasks within them, as a human might.

This work isn’t about achieving high game scores. Learning to play even one video game is a technical feat for an AI system, but learning to follow instructions in a variety of game settings could unlock more helpful AI agents for any environment. Our research shows how we can translate the capabilities of advanced AI models into useful, real-world actions through a language interface. We hope that SIMA and other agent research can use video games as sandboxes to better understand how AI systems may become more helpful.”

DeepMind

⏭️Meta AI: A glimpse at Meta’s GenAI Infrastructure, also heading towards “AGI”:

- 🫤Grok-1: Elon Musk’s AI company xAI released the base model weights and network architecture of Grok-1, their latest large language model, under an Apache 2.0 open source license: a 314 billion parameter Mixture-of-Experts model trained from scratch by xAI. (For comparison: OpenAI’s GPT-4 reportedly had 1.76 trillion params at last count.)

- 🎭Midjourney released consistent characters, making generative AI storytelling far easier – with creators now able to create consistent characters in images and video. It’s not absolutely 100% (eg beard length below) but pretty spot on:

- 🔍Magnific launched a spectacular new feature for their upscaler: Style Transfer

- 💬Hi, Devin here: Last week I covered Devin, the *AI developer*… several more views have filtered out that it’s pretty advanced but REALLY SLOW and involves lots of GPT-4 API calls… also here it is (apparently) reaching out on Slack to ask a human for help:

Ric Burton got into a spot of bother entering Norway accidentally carrying some, er, exotic fungi at the bottom of his bag. Who did he call?

🎩 Kate S for spotting:

As if all that AI in ONE WEEK wasn’t enough…it’s been a HUGE week across the rest of tech as well. Everything is a headline…

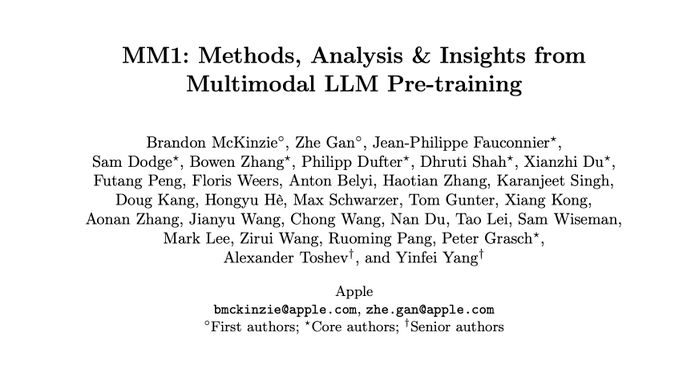

SpaceX’s 3rd launch attempt of its massive Starship rocket achieved orbit.

A reminder of how big it is:

I could watch this spectacular launch video over and over (just don’t mention the cognitive dissonance of all the CO2 emissions involved…)

The Starship made it into orbit and managed to open the payload door… but then they lost comms / control of the rocket on re-entry. But not before:

“The Starship connected to SpaceX’s own satellite internet network while it was hurtling violently around the Earth so that it could live stream its flight through a plasma field to millions of viewers. Please read the previous sentence again.”

So overall the launch could be considered a success. (Although that didn’t stop some media headlines throwing shade by emphasising the loss…)

Behind it all, the scale of SpaceX’s capability advantage over global competition now looks unassailable:

Expert commentary seems to be that once the Super Heavy booster is reusable, Starship will cost only $30M/launch. That is an order of magnitude cheaper on a $/kg-to-LEO basis than any existing non-SpaceX launch system.

A reminder that in the last month we have seen:

Quite a time to be alive.

IBM put out a bullish updated glossy report on the latest advances in Quantum computing and potential applications… download The Quantum Decade yourself and see if you’re convinced. It says:

“We are entering the era of quantum utility—a point at which quantum computers could serve as scientific tools to tackle problems that classical systems may never be able to solve. At this critical moment, the IBM Institute for Business Value brings you the fourth edition of The Quantum Decade.

This new edition contains experiments underscoring the utility of near-term quantum computers, as well as extensive updates and important information that you—and your organization—need to stay current on this important technology.”

(Generally — as I cover in my book — there is quite some scepticism on the timelines to actually useful quantum computers, but hey…)

Things to do with sunlight hitting the Earth:

- Harvard has halted its long-planned atmospheric geoengineering experiment:

“The plan for the Harvard experiments was to launch a high-altitude balloon, equipped with propellers and sensors, that could release a few kilograms of calcium carbonate, sulfuric acid or other materials high above the planet. It would then turn around and fly through the plume to measure how widely the particles disperse, how much sunlight they reflect and other variables.”

Too hard politically?

- Reflect Orbital is a startup with a great strapline: “We sell sunlight after dark”. They are developing a constellation of revolutionary satellites to reflect sunlight down to thousands of terrestrial solar farms after dark. Here’s a rather excitable POC demo from a balloon:

- More tangibly… MEER (Mirrors for Earth’s Energy Rebalancing) is a nonprofit which is aiming to address climate change, particularly this looming problem:

MEER designs and deploys surface solar reflectors, which can be made from recycled materials, in an “economically feasible and environmentally sensitive way”. Locally, they are used for heat adaptation purposes. Globally, the reflectors provide thermal mitigation to partially rebalance Earth’s energy. Basically they put mirrors on roofs. Here’s a project in Freetown, Sierra Leone:

The Logos Network is a new privacy-centric decentralised computing initiative which was formally announced this week, comprising 3 complementary technology components:

“@Waku_org is our information highway.

@Codex_storage is our incorruptible archive.

@Nomos_tech is our participatory parliament. Logos is our line in the sand. We will reclaim our digital commons.”

The technology is driving towards being the operating layer of formative Network States – co-founder Jarrad Hope writes about the vision here: Enclaves In The Net

Also keeping an eye out for this… Daylight Computer is a new startup making a zero-blue-light tablet designed to be used outdoors. Would you prefer this…. or an Apple Vision Pro which hurts your eyes? (Runs Android…. maybe a worthy replacement for my aging Kindle…)

Researchers managed to crack Meta’s Quest VR system:

“In the attack, hackers create an app that injects malicious code into the Meta Quest VR system and then launch a clone of the VR system’s home screen and apps that looks identical to the user’s original screen. Once inside, attackers can see, record, and modify everything the person does with the headset. That includes tracking voice, gestures, keystrokes, browsing activity, and even the user’s social interactions. The attacker can even change the content of a user’s messages to other people.”

(When we get to retina-quality XR… it may be impossible to tell what is real and what is just pixels. There’s an Iain M Banks novel which starts with a character awakening in a completely unfamiliar setting and all they have to hold on to is the word “Simulation” flashing at the edge of their vision..)

Finally…. scientists have discovered a new electroadhesive ‘meat magnet’ which can bond hard metal to soft biological tissue without using any adhesive, and which can then be easily removed when no longer needed.

Our cyborg future?

Once around the world skimming across the top of so much happening…

(It’s been a while since I’ve covered this… remember back at the beginning of Memia in 2021 when the pandemic was top of the agenda in every issue… how times have changed.)

“The origin of severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) is contentious. Most studies have focused on a zoonotic origin, but definitive evidence such as an intermediary animal host is lacking. We used an established risk analysis tool for differentiating natural and unnatural epidemics, the modified Grunow–Finke assessment tool (mGFT) to study the origin of SARS-COV-2. The mGFT scores 11 criteria to provide a likelihood of natural or unnatural origin. Using published literature and publicly available sources of information, we applied the mGFT to the origin of SARS-CoV-2. The mGFT scored 41/60 points (68%), with high inter-rater reliability (100%), indicating a greater likelihood of an unnatural than natural origin of SARS-CoV-2. This risk assessment cannot prove the origin of SARS-CoV-2 but shows that the possibility of a laboratory origin cannot be easily dismissed.”

Methane emissions detection is becoming more accurate:

Meanwhile last week we hit 366 consecutive global Sea Surface Temperature records:

A reminder of what this means: a seminal paper from 2019, Future of the human climate niche, found that the world was changing pretty quickly indeed:

“for thousands of years, humans have concentrated in a surprisingly narrow subset of Earth’s available climates, characterized by mean annual temperatures around ∼13 °C.”

Here’s a politely drawn map from that research showing 2070 if emissions continue along the highest-emissions, no-policy RCP8.5 scenario. The black areas show 2019 areas with mean annual temperature above 29°C (“uninhabitable”); the shaded areas show the projection for 2070.

Redrawing this (somewhat clumsily), Peter Dynes emphasises the uninhabitable areas in black. (Absent migration, this area would be home to 3.5 billion people in 2070 following the SSP3 scenario of demographic development.)

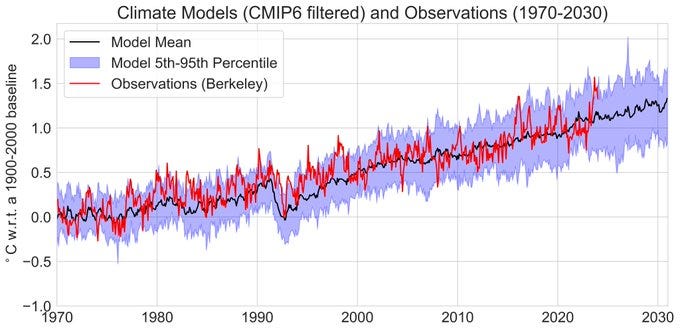

It’s perhaps not all doom….Zeke Hausfather at Berkeley Earth is cautiously sanguine:

“Its a weird bit of whiplash going from arguing with folks claiming models are warming too fast a year ago (no warming since 2016!) to folks arguing that models are warming too slow today. One year does not a trend make, and models are by and large doing ok:”

2024 will tell.

Nonetheless, looking on longer timeframes illustrates the major challenges ahead:

It’s hard to get much information on what’s happening with the Russia-Ukraine war now that it is out of media headlines. Nate Hagens interviewed Chuck Watson last week and I’d recommend his rational, sobering appraisal of the current situation and how it might play out.

Thirteen countries across Africa experienced Internet outages last Thursday due to damage to multiple submarine fiber optic cables somewhere off the West African coast. Some countries only suffered 30-minute outages; South Africa’s (five hours) was longer, and six countries (Ghana, Nigeria, Benin, Burkina Faso, Cameroon and Côte d’Ivoire) were still suffering from nationwide outages days later.

Cable operator MainOne said that preliminary analysis suggested some form of seismic activity on the seabed resulted in a break to a cable, which is far offshore and at a depth of about 3km at the point of fault – so accidental human activity from ship anchors, fishing, drilling etc has been ruled out.

Ongoing cable cuts in the Red Sea are also impacting overall capacity on the East Coast of Africa. Microsoft said that these incidents together had reduced the total network capacity for most of Africa’s regions.

A reminder of the lack of resilience for internet infrastructure in most countries.

The proposed ~~ban~~ *robust regulation* of TikTok in the US is now awaiting Senate approval, after the House of Representatives passed a bill last week to force the company to sell its US operations.

TikTok supporters have been mobilised by, er, TikTok:

And also there’s a fightback in the mainstream US media:

Favourite commentary angle from the refreshingly quirky Kyla Scanlon:

“The TikTok story is interesting from an economic, geopolitical, and social lens but with the app stagnating (growth has flatlined! 9% of users aged 18-24 left last year!) the big question isn’t will TikTok sell… it’s who will replace it”

Not only have I been drinking from the X firehose this week… but also finding some quality entertainment out there too.

Unknown: Cosmic Time Machine is a really watchable documentary on the story of the James Webb Space Telescope (JWST) from initiation through to deployment a million km away from Earth, making it past 344 single points of failure.

Dr Formalyst is at the leading edge of new AI artists:

“a visual artist who explores the edge between figuration and abstraction using generative CGI, code and AI. His work aims to evoke elusive narratives that dwell in the intangible space just beyond the limits of comprehension.”

Their AI video work Almost Human:

“Almost human forms, made in almost human way.

This is an audio/visual project that subverts the concept of human identity in a world where technology is gaining the capacity to uncontrollably change society, culture, and our very species itself. By introducing a non-human creative collaborator, the project aims to provide a fresh and more objective perspective on how our minds and bodies work.”

Here’s the latest shared on X: @dr_formalyst – dancing fingers…very cool.

Their work was exhibited as part of Weird Sensation Feels Good: The World of ASMR, which ran at the Design Museum, London from May 2022 to April 2023 and is scheduled to travel globally during 2024: check for updates.

Another AI artist of note:

“Niceaunties is a surreal world created by a designer and AI artist based in Singapore. Inspired by the women in her family and the absurdity of ‘auntie culture’, Niceaunties explores the themes of ageing, beauty, personal freedom and everyday life using the mediums of generative and digital art. Niceaunties art is influenced by surrealism, fantasy and kawaii culture.”

The short video Auntlantis which you may have seen on social media is visually gripping:

Many more examples on the Niceaunties YouTube channel.

Top-shelf comedic AI commentary from Cadbury’s marketing team in India:

Finally…

😅Whew, that was a big one this week! 🙏🙏🙏 As always thank you to readers who take the time to get in touch with links and feedback… my email inbox has been a bit full recently so some delays in getting back to you but I aim to respond to every email.

Ciao

Ben